No data, no AI.

Know data, know AI. Kind of.

Data discovery is a core capability across the enterprise. It powers analytics, personalization, security monitoring, and increasingly, AI itself. The difference in 2026 is scale. AI has accelerated how quickly organizations can find, classify, and activate data. But it has also raised the stakes.

For data and security leaders, the question is no longer whether to use AI in data discovery. It’s how to do it in a way that improves visibility without introducing new risk.

What AI-Driven Data Discovery Means Across the C-Suite

AI has made data discovery faster and more dynamic. It can automatically classify data, detect sensitive information, and surface patterns that would have taken weeks to uncover manually. For CDOs, this creates a clearer picture of the data estate. For CISOs, it improves threat detection.
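To make "detect sensitive information" concrete, here is a minimal sketch of field-level classification. It uses simple regular expressions as a stand-in for the AI-based classifiers described above, and the pattern set, the labels, and the `classify_record` helper are all hypothetical names chosen for illustration, not any product's API.

```python
import re

# Illustrative patterns only (an assumption): production classifiers combine
# many more rules with ML models and contextual signals.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def classify_record(record: dict[str, str]) -> dict[str, list[str]]:
    """Return, per field, the sensitive-data labels whose pattern matches the value."""
    findings = {}
    for field_name, value in record.items():
        labels = [label for label, pattern in PATTERNS.items() if pattern.search(value)]
        if labels:
            findings[field_name] = labels
    return findings


record = {
    "note": "Contact jane.doe@example.com about the renewal",
    "tax_id": "123-45-6789",
    "city": "Atlanta",
}
findings = classify_record(record)
print(findings)  # {'note': ['email'], 'tax_id': ['us_ssn']}
```

Even a toy version like this shows why discovery matters to both CDOs and CISOs: the same findings feed the data catalog and the security review.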

But the same capabilities introduce complexity.

Privacy teams are still focused on how data is collected and used. Security teams are focused on how it’s protected. Data leaders are focused on how it’s activated. AI sits across all three. That’s where friction starts to show up.

Where Privacy Concerns Are Evolving

AI is helping privacy teams move faster. Tasks like data classification, monitoring, and assessments can now be automated. Risk can be identified earlier. Patterns can be detected across systems that were previously disconnected.

But the concerns haven’t gone away. They’ve shifted.

Data Collection and Use

AI systems rely on large volumes of data. The challenge is knowing whether that data was collected with the right permissions, whether it should be used for training, and whether it aligns with evolving requirements around consent and purpose limitation. As new laws come into force across the U.S. and global markets, expectations around disclosure and control are becoming more explicit.
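One way to picture purpose limitation is as a filter applied before data reaches a training pipeline. The sketch below assumes each record carries the set of purposes its owner consented to. The `Record` type, the purpose names, and the `eligible_for` helper are hypothetical, and real consent models are usually more granular (jurisdiction, expiry, withdrawal).

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    record_id: str
    # Purposes the data subject agreed to (hypothetical labels).
    consented_purposes: set[str] = field(default_factory=set)


def eligible_for(purpose: str, records: list[Record]) -> list[Record]:
    """Keep only records whose recorded consent covers the requested purpose."""
    return [r for r in records if purpose in r.consented_purposes]


records = [
    Record("r1", {"analytics", "model_training"}),
    Record("r2", {"analytics"}),
    Record("r3"),  # no recorded consent at all
]
training_set = eligible_for("model_training", records)
print([r.record_id for r in training_set])  # ['r1']
```

Records without an explicit match are excluded by default, which mirrors the cautious reading of consent requirements.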

Transparency and Accountability

The complexity of AI systems makes it harder to explain how decisions are made. That’s becoming a real issue as regulatory expectations increase. The direction of travel is clear. It’s not enough to only document policies; organizations need to show how decisions were made, what data was used, and how risks were managed.

Bias and Fairness

Bias remains one of the most visible risks in AI. If training data is skewed, outcomes will be too. The difference now is that organizations are expected to actively test for this and demonstrate that mitigation steps are in place, especially in high-impact use cases.
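As an illustration of what "actively test" can look like, the sketch below compares approval rates across groups and reports the gap between the highest and lowest rate, a simple demographic parity check. The sample outcomes, group labels, and function names are made up for this example, and a real review would use several fairness metrics rather than this one alone.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """Map each group to its share of positive outcomes.

    `outcomes` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in outcomes:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(rates):
    """Difference between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical model decisions for two groups.
outcomes = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = selection_rates(outcomes)
gap = parity_gap(rates)
print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```

What counts as an acceptable gap is a policy decision, not something the code can settle. The point is that the check is repeatable and its result can be documented.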

What Security Teams Are Dealing With Now

From a security perspective, AI is both an advantage and a new attack surface.

AI-driven tools can detect anomalies, identify threats, and respond faster than traditional systems. That’s a clear win. But AI systems also introduce new risks that security teams need to manage.

Data Exposure Risk

AI models require access to large datasets. That makes them a target. Protecting training data, limiting access, and ensuring sensitive data isn’t unintentionally exposed is now part of the core security mandate.

Adversarial Threats

Attackers are getting more sophisticated, crafting and manipulating inputs to deceive AI models. Security teams need to account for this when deploying AI in critical workflows.

Integration Risk

AI doesn’t operate in isolation. It connects to existing systems, APIs, and data sources. If those integrations aren’t governed properly, they can introduce vulnerabilities. Fine-tuning models with internal data is a good example. It creates value, but it also increases the risk of unintended data exposure if not controlled.

Where Data Leaders Come Into Focus

This is where the CDO role becomes more central.

AI-driven data discovery is only as effective as the data behind it. If data is poorly governed, incomplete, or inconsistent, AI will amplify those issues. If data is well-managed, AI becomes a force multiplier.

CDOs are increasingly responsible for ensuring that data is not just available, but usable, trusted, and governed in context. That includes understanding where data comes from, how it moves, and how it’s used across AI systems.

In practice, this means aligning data governance with AI governance. Not as separate programs, but as a connected system.

Using AI Responsibly in Data Discovery

Organizations are starting to move from principles to execution. The goal is not to slow AI adoption but to build guardrails that scale with it.

A few priorities are emerging:

- Governance needs to be operational, not theoretical

Policies should connect directly to how data is collected, classified, and used within AI systems.

- Data quality and context matter more than volume

High-quality, well-governed data reduces risk and improves outcomes. More data is not always better.

- Transparency needs to be built in

Teams should be able to trace how data flows into AI systems and how decisions are made.

- Human oversight still matters

AI can accelerate decisions, but it shouldn’t replace accountability. Clear review and escalation paths are essential.

- Continuous monitoring is the baseline

Risk is not static. AI systems need to be monitored over time to detect drift, bias, and unexpected behavior.
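For the monitoring priority, drift detection can be sketched with the Population Stability Index (PSI), a common way to compare a feature's current distribution against a baseline. This is one possible technique, not a prescribed one. The bin proportions and the 0.2 alert threshold below are assumptions for illustration (0.2 is a widely used rule of thumb, not a standard).

```python
import math


def psi(expected, actual, eps=1e-6):
    """Population Stability Index between two binned distributions.

    `expected` and `actual` are lists of bin proportions over the same bins.
    `eps` guards against log(0) when a bin is empty.
    """
    total = 0.0
    for e, a in zip(expected, actual):
        e = max(e, eps)
        a = max(a, eps)
        total += (a - e) * math.log(a / e)
    return total


# Hypothetical share of records per bin at training time vs. today.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]

DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb alert level

score = psi(baseline, current)
drifted = score > DRIFT_THRESHOLD
print(f"PSI={score:.3f}, drift alert: {drifted}")
```

Running a check like this on a schedule, and routing alerts to a named owner, is what turns "continuous monitoring" from a principle into a control.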

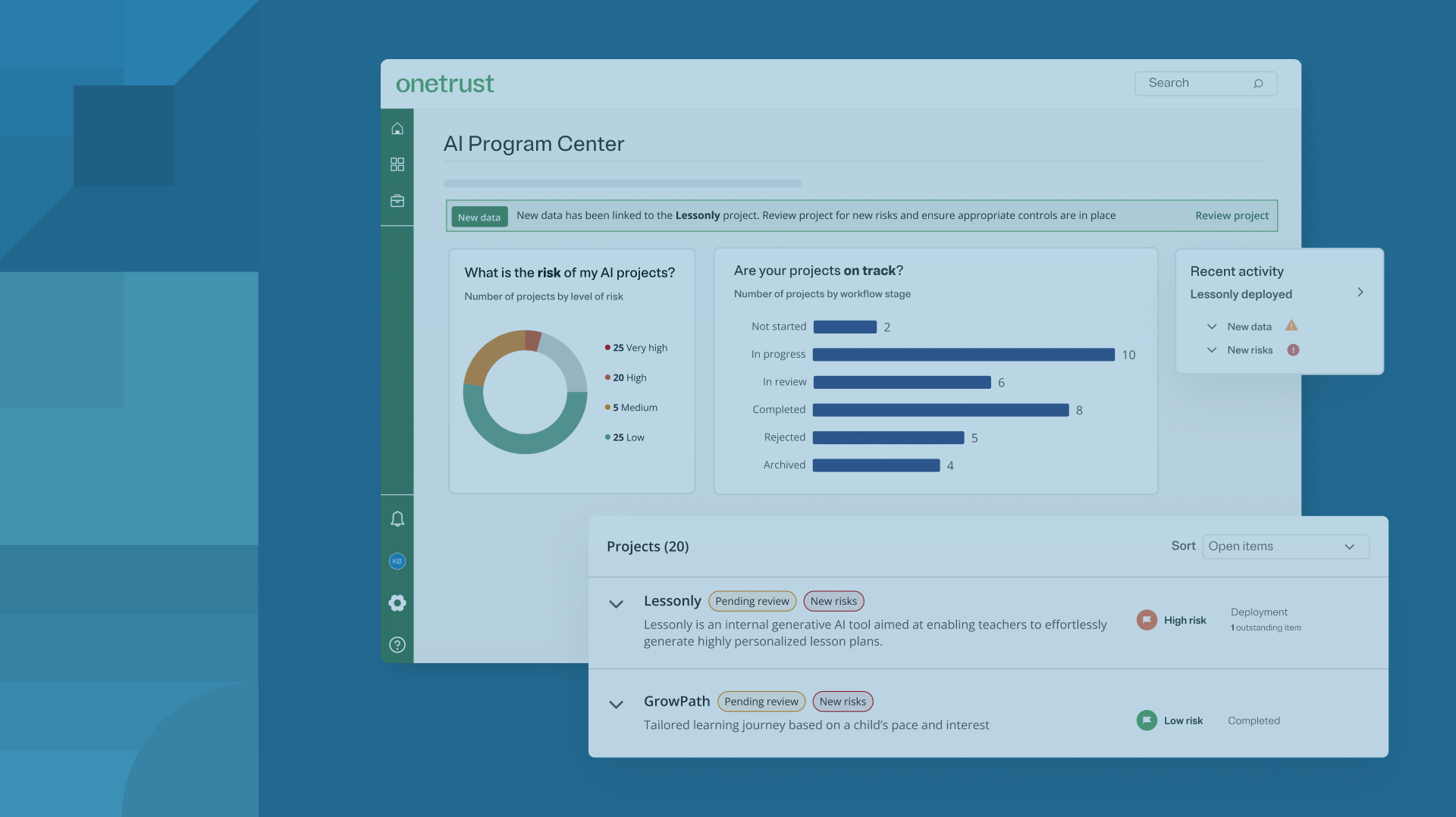

This is where AI governance platforms play a role. They connect data discovery, privacy, security, and compliance into a single operational layer. Instead of managing each piece separately, teams can work from a shared view of risk and performance.

This is where OneTrust AI Governance helps. OneTrust’s AI-Ready Governance platform connects data discovery, privacy, security, and compliance into a single operational layer and unites a variety of capabilities, including AI-powered document classification.

The Road Ahead

AI has changed what’s possible in data discovery. It has also changed what’s expected.

For data and security leaders, success will depend on how well these capabilities are aligned. Not just technically, but operationally. The organizations that get this right will be able to move faster with more confidence, knowing that their data is not only powerful, but properly governed.

To see how this works in practice, explore how OneTrust helps teams operationalize AI governance across their entire organization.